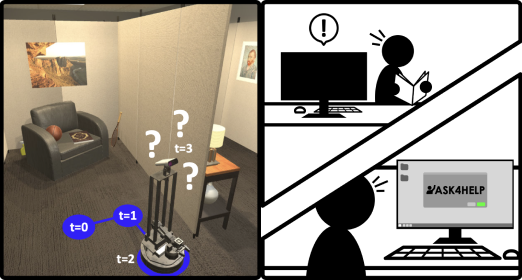

Embodied AI agents become more capable every year with the advent of new models, environments, and benchmarks, but they are still far from being performant and reliable enough to be deployed in real, user-facing applications. In this paper, we ask: can we bridge this gap by enabling agents to ask for assistance from an expert such as a human being? To this end, we propose the Ask4Help policy, which augments agents with the ability to request and then use expert assistance. Ask4Help policies can be trained efficiently without modifying the original agent's parameters, and they learn a desirable trade-off between task performance and the amount of requested help, thereby reducing the cost of querying the expert. We evaluate Ask4Help on two tasks, object goal navigation and room rearrangement, and see substantial improvements in performance using minimal help. On object navigation, an agent that achieves a 52% success rate is raised to 86% with 13% help, and on rearrangement, the state-of-the-art model with a 7% success rate is improved to 90.4% using 39% help. Human trials with Ask4Help demonstrate the efficacy of our approach in practical scenarios. The code can be found on GitHub.

We consider a typical Embodied-AI model and augment it with the ability to query an expert for help. Asking for too much help can be costly, or annoying if the expert is a human being. Our goal is therefore to strike a good trade-off between asking for as little help as possible and maximizing the agent's performance at its task. We propose a framework for learning a policy that decides when it is most effective to ask for help, and we refer to this policy as the Ask4Help policy. Importantly, we learn the Ask4Help policy without modifying the weights of the underlying Embodied-AI model, which lets us easily augment any off-the-shelf model with this capability. We also use reward embeddings to adapt to user preferences at test time. More details can be found in our paper here.
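The trade-off described above can be illustrated with a minimal Python sketch. This is not the released implementation: the names `RewardConfig`, `step_reward`, and `choose_action`, the penalty values, and the confidence-threshold stand-in for the learned policy are all hypothetical and chosen only for illustration. The sketch assumes that asking for help incurs a small per-step penalty, that failing the task incurs a larger one, and that the frozen agent's action is overridden by the expert's whenever help is requested.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class RewardConfig:
    """Hypothetical user preference: cost of help relative to cost of failure."""
    help_penalty: float = -1.0      # charged on every step where help is requested
    failure_penalty: float = -10.0  # charged once if the episode ends in failure


def step_reward(asked: bool, done: bool, success: bool, cfg: RewardConfig) -> float:
    """Reward seen by the help-seeking policy for a single step."""
    reward = cfg.help_penalty if asked else 0.0
    if done and not success:
        reward += cfg.failure_penalty
    return reward


def choose_action(
    belief: float,
    agent_action: str,
    expert_action: str,
    ask_policy: Callable[[float, RewardConfig], bool],
    cfg: RewardConfig,
) -> Tuple[str, bool]:
    """Use the expert's action if the policy asks for help, else the frozen agent's.

    The underlying agent is never updated here; only `ask_policy` would be trained.
    """
    asked = ask_policy(belief, cfg)
    return (expert_action if asked else agent_action), asked


def threshold_policy(belief: float, cfg: RewardConfig) -> bool:
    """Toy stand-in for a learned policy: ask when a scalar confidence is low."""
    return belief < 0.5


cfg = RewardConfig()
action, asked = choose_action(0.2, "MoveAhead", "RotateLeft", threshold_policy, cfg)
print(action, asked, step_reward(asked, done=False, success=False, cfg=cfg))
```

Varying the two penalties in `RewardConfig` is one simple way to picture different user preferences: a larger help penalty favors fewer requests, while a larger failure penalty favors asking more often.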